Most transcription tools take the obvious path: download the audio, run Whisper, wait 2–5 minutes. We found a better way.

The Insight: YouTube Already Has the Transcript

About 80% of the YouTube videos people want to transcribe already have captions, either uploaded by the creator or auto-generated by YouTube. These captions are essentially a pre-computed transcript: instant to retrieve and, once cleaned up, accurate enough to use directly.

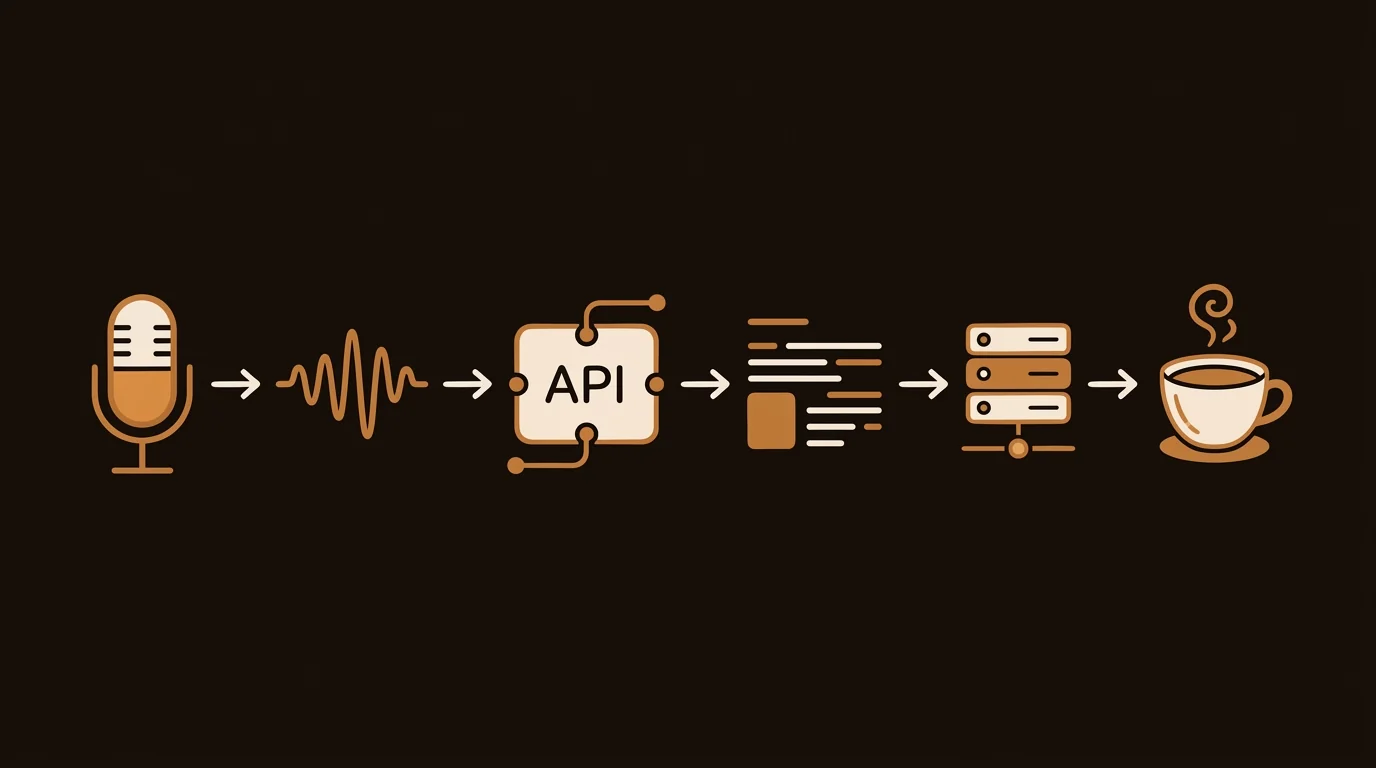

So we built a two-stage pipeline: first, try to pull the existing YouTube captions. If they exist, use them and skip the audio entirely. If they don't exist, fall back to Whisper.
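Here is a minimal sketch of that control flow, not our production code. The names `Transcript`, `transcribe`, `fetch_captions`, and `transcribe_audio` are hypothetical; the two callables stand in for whatever caption client and Whisper wrapper you use.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Transcript:
    text: str
    source: str  # "captions" or "whisper"


def transcribe(
    video_id: str,
    fetch_captions: Callable[[str], Optional[str]],  # hypothetical caption client
    transcribe_audio: Callable[[str], str],          # hypothetical Whisper wrapper
) -> Transcript:
    """Two-stage pipeline: existing captions first, speech-to-text as fallback."""
    try:
        captions = fetch_captions(video_id)
    except Exception:
        # Treat any caption lookup failure as "no captions" and fall through.
        captions = None

    if captions and captions.strip():
        # Fast path: the audio is never downloaded.
        return Transcript(text=captions, source="captions")

    # Slow path: download the audio and run the speech model.
    return Transcript(text=transcribe_audio(video_id), source="whisper")
```

Passing the two stages in as functions keeps the fallback logic testable without network access, and recording `source` lets later steps decide how much cleanup the text needs.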

The Result: 10x Faster for the Common Case

For the 80% of videos with captions, transcription is near-instant, typically under 5 seconds. For the 20% without, we run Faster-Whisper and the user waits a minute or two. Weighting those figures 80/20 gives an average of roughly 16 to 28 seconds per video, against 2–5 minutes for the naive approach.
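The average is easy to check with back-of-the-envelope arithmetic. The sketch below assumes the figures quoted above: an 80/20 split, 5 seconds as the upper bound for the caption path, and "a minute or two" read as 60 to 120 seconds. The function name `expected_seconds` is made up for illustration.

```python
def expected_seconds(caption_pct: int, fast_seconds: float, slow_seconds: float) -> float:
    """Average wait when caption_pct% of videos take the fast path."""
    return (caption_pct * fast_seconds + (100 - caption_pct) * slow_seconds) / 100


# Assumed inputs, taken from the figures in the text.
best_case = expected_seconds(80, 5, 60)    # 16.0 seconds
worst_case = expected_seconds(80, 5, 120)  # 28.0 seconds

# The naive approach runs Whisper on everything: 2-5 minutes, i.e. 120-300 s.
naive_low, naive_high = 120, 300
print(f"average wait: {best_case:.0f}-{worst_case:.0f} s")
print(f"speedup: {naive_low / worst_case:.1f}x to {naive_high / best_case:.1f}x")
```

Under these assumptions the blended speedup lands somewhere between roughly 4x and 19x, while the captioned case on its own (5 seconds against 2–5 minutes) is well beyond 10x.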

Post-Processing with an LLM

Raw YouTube auto-captions are noisy: no punctuation, inconsistent capitalization, "um" and "uh" everywhere. After extraction, we pipe the raw text through an LLM to clean it up: add sentence structure, fix proper nouns, and remove filler words.

This step adds a second or two but makes the output dramatically more readable — and much better input for downstream summarization.
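The cleanup step could look something like the sketch below. The prompt wording is illustrative, not the one we ship, and `complete` is a hypothetical callable wrapping whichever LLM client you use; `build_cleanup_prompt` and `clean_transcript` are likewise made-up names.

```python
from typing import Callable

# Illustrative instructions covering the three fixes described above.
CLEANUP_INSTRUCTIONS = (
    "Clean up this raw video transcript. "
    "Add punctuation and sentence structure, fix the capitalization of proper nouns, "
    "and remove filler words such as 'um' and 'uh'. "
    "Do not add, remove, or reorder any other content. "
    "Return only the cleaned transcript."
)


def build_cleanup_prompt(raw_captions: str) -> str:
    """Combine the fixed instructions with the raw caption text."""
    return f"{CLEANUP_INSTRUCTIONS}\n\nTranscript:\n{raw_captions.strip()}"


def clean_transcript(raw_captions: str, complete: Callable[[str], str]) -> str:
    """Send raw captions through an LLM; `complete` is an assumed prompt-in, text-out wrapper."""
    if not raw_captions.strip():
        return ""
    cleaned = complete(build_cleanup_prompt(raw_captions)).strip()
    # If the model returns nothing, keep the raw text rather than lose the transcript.
    return cleaned or raw_captions.strip()
```

Telling the model explicitly not to change anything else is a cheap guard against it summarizing or paraphrasing when all you want is punctuation and filler removal.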

Across 8+ years, I've built full-stack and platform systems using TypeScript, Node, React, Java, AWS, and Azure, applying AI to practical problems and turning ambitious ideas into shipped products.