The Claude Code leak attracted a lot of attention. Most commentary focused on the leak itself, but the more valuable question is what it tells us about how serious AI products should be built.

At sipsip.ai, we build practical AI workflows around long-form content, transcription, and summarization. That gives us a clear lens on what this incident means for product architecture and operational security.

Claude Code Leak Lessons in 60 Seconds

If you searched for Claude Code leak lessons, this is the condensed takeaway:

- AI products must be built as execution systems, not chatbot wrappers.

- Source map security is a release engineering requirement, not an optional check.

- Trust comes from system quality: permissions, observability, and predictable recovery.

- Product teams that harden architecture now will move faster with lower risk later.

What This Incident Really Signals

The Claude Code leak is not only a packaging mistake story. It is also a snapshot of category maturity.

In 2026, competitive AI products are no longer “prompt in, answer out.” They are full execution systems with:

- session state and context management

- tool orchestration and retries

- external integrations

- policy and permission controls

- remote or asynchronous execution paths

When a product reaches that complexity, release hygiene and data security become first-order product concerns, not compliance afterthoughts.

Architecture Lessons for AI Product Teams

1) Execution Architecture Matters More Than Prompt Tricks

A model can be excellent and still fail users if the runtime around it is weak.

The reliable products in this category usually have:

- deterministic execution boundaries

- strong tool-call error handling

- predictable fallback behavior

- observability around long-running jobs

This is why, over time, architecture beats prompt cleverness.
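The sketch below makes those properties concrete for one tool-call boundary. It is a minimal TypeScript example with three parts: a bounded number of attempts, exponential backoff between attempts, and a fallback value that is returned instead of an exception once every attempt has failed. The names (callWithRetry, RetryPolicy, CallOutcome) are illustrative assumptions and do not come from any particular framework.

```typescript
// Illustrative sketch: a bounded retry wrapper for a single tool call.
// Type and function names are assumptions, not a specific framework's API.

type ToolCall<T> = () => Promise<T>;

interface RetryPolicy {
  maxAttempts: number; // hard upper bound on attempts, including the first one
  baseDelayMs: number; // wait before the 2nd attempt; doubles on each later attempt
}

type CallOutcome<T> =
  | { status: "ok"; value: T; attempts: number }
  | { status: "fallback"; value: T; attempts: number; lastError: string };

const sleep = (ms: number): Promise<void> =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

async function callWithRetry<T>(
  call: ToolCall<T>,
  fallback: T,
  policy: RetryPolicy,
): Promise<CallOutcome<T>> {
  let lastError = "no attempts made";
  for (let attempt = 1; attempt <= policy.maxAttempts; attempt++) {
    try {
      const value = await call();
      return { status: "ok", value, attempts: attempt };
    } catch (err) {
      lastError = err instanceof Error ? err.message : String(err);
      // Back off exponentially, but never sleep after the final attempt.
      if (attempt < policy.maxAttempts) {
        await sleep(policy.baseDelayMs * 2 ** (attempt - 1));
      }
    }
  }
  // Every attempt failed: return the predictable fallback instead of throwing.
  return { status: "fallback", value: fallback, attempts: policy.maxAttempts, lastError };
}
```

The function returns a tagged outcome rather than throwing. That keeps the fallback path explicit, so the caller can show a degraded state instead of failing silently. The total number of attempts is fixed by maxAttempts, which means a persistent failure costs a known, bounded amount of time.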

2) Context Strategy Is a Core Capability

Long sessions create hidden failure modes: token overflow, inconsistent memory, and cost spikes.

If your product handles multi-step tasks, you need explicit policies for:

- context compaction

- summary checkpoints

- token and cost budgets

- safe continuation after partial failure

Without this, quality degrades exactly when workflows become valuable.
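Here is one way to combine context compaction with a summary checkpoint. The sketch keeps the most recent turns verbatim. Once an estimated token count exceeds a budget, it folds all older turns into a single summary turn. It rests on two assumptions, and both are placeholders rather than a real tokenizer or API:

- token counts use a rough four-characters-per-token estimate;
- the summary comes from a caller-supplied summarize function, which in practice would likely be a cheaper model call.

```typescript
// Illustrative sketch of context compaction with a summary checkpoint.
// The Turn shape, budget values, and summarizer are assumptions.

interface Turn {
  role: "user" | "assistant" | "tool" | "summary";
  text: string;
}

type Summarizer = (turns: Turn[]) => string;

// Rough heuristic (~4 characters per token for English text), not a real tokenizer.
const estimateTokens = (text: string): number => Math.ceil(text.length / 4);

// Returns the history unchanged while it fits the budget; otherwise keeps the
// last `keepRecent` turns verbatim and replaces everything older with one
// summary checkpoint produced by `summarize`.
function compactContext(
  history: Turn[],
  budgetTokens: number,
  keepRecent: number,
  summarize: Summarizer,
): Turn[] {
  const total = history.reduce((sum, turn) => sum + estimateTokens(turn.text), 0);
  if (total <= budgetTokens || history.length <= keepRecent) {
    return history;
  }
  const cut = history.length - keepRecent;
  const checkpoint: Turn = { role: "summary", text: summarize(history.slice(0, cut)) };
  return [checkpoint, ...history.slice(cut)];
}
```

Note that the compacted history can still exceed the budget when the kept turns are themselves long. A production policy would need an explicit rule for that case as well.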

3) Integrations Need Product-Grade Reliability

As AI products connect to more tools and systems, integration reliability becomes a primary UX driver.

Users do not care whether a failure came from the model, the tool layer, auth, or transport. They only see that the workflow broke.

Treat integrations as core product surfaces with:

- typed interfaces

- auth/session lifecycle handling

- standardized retries and timeouts

- clear user-facing failure states
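A typed result is one way to give every integration failure a clear user-facing state. The sketch below is illustrative, and its specifics are assumptions: each call is wrapped in a timeout, a hypothetical HttpError with status 401 is treated as an expired session, and an exhaustive switch guarantees that every failure kind maps to a message the user can act on.

```typescript
// Illustrative sketch: a typed integration result with explicit failure states.
// The failure kinds, the HttpError class, and the messages are assumptions.

class HttpError extends Error {
  readonly status: number;
  constructor(status: number) {
    super(`HTTP ${status}`);
    this.status = status;
  }
}

type IntegrationResult<T> =
  | { kind: "ok"; data: T }
  | { kind: "auth_expired"; provider: string }
  | { kind: "timeout"; provider: string; afterMs: number }
  | { kind: "upstream_error"; provider: string; status: number | null };

type Raced<T> = { timedOut: true } | { timedOut: false; value: T };

// Settles with whichever comes first: the work or the timer. Later results are ignored.
function withTimeout<T>(work: Promise<T>, ms: number): Promise<Raced<T>> {
  return new Promise<Raced<T>>((resolve, reject) => {
    const timer = setTimeout(() => resolve({ timedOut: true }), ms);
    work.then(
      (value) => { clearTimeout(timer); resolve({ timedOut: false, value }); },
      (err) => { clearTimeout(timer); reject(err); },
    );
  });
}

// Runs one integration call with a timeout and classifies the outcome.
async function callIntegration<T>(
  provider: string,
  work: () => Promise<T>,
  timeoutMs: number,
): Promise<IntegrationResult<T>> {
  try {
    const outcome = await withTimeout(work(), timeoutMs);
    if (outcome.timedOut) return { kind: "timeout", provider, afterMs: timeoutMs };
    return { kind: "ok", data: outcome.value };
  } catch (err) {
    if (err instanceof HttpError && err.status === 401) return { kind: "auth_expired", provider };
    return { kind: "upstream_error", provider, status: err instanceof HttpError ? err.status : null };
  }
}

// Maps every result kind to a message the user can act on (null means success).
function toUserMessage<T>(result: IntegrationResult<T>): string | null {
  switch (result.kind) {
    case "ok":
      return null;
    case "auth_expired":
      return `Your ${result.provider} connection has expired. Please reconnect it.`;
    case "timeout":
      return `${result.provider} did not respond within ${result.afterMs / 1000}s. Please try again.`;
    case "upstream_error":
      return result.status === null
        ? `${result.provider} request failed. Please try again.`
        : `${result.provider} returned an error (HTTP ${result.status}). Please try again.`;
    default: {
      // Compile-time exhaustiveness check: a new kind without a case fails here.
      const unhandled: never = result;
      return unhandled;
    }
  }
}
```

The never check refuses to compile if someone adds a new failure kind without also adding its message. As a result, the UI cannot quietly fall back to a generic error.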

4) Security Defaults Must Be Built Into Runtime Design

For modern AI tools, security is not just encryption at rest and TLS in transit.

Security depends on runtime design decisions such as:

- what the agent can read and execute

- how permissions are granted and audited

- how secrets are scoped and rotated

- how artifacts are packaged and shipped

The release pipeline is part of the threat model.
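The sketch below shows how “what the agent can read and execute” and “how permissions are granted and audited” might look in code, as a minimal deny-by-default permission gate. The grant shape, the action names, and the path-prefix matching are assumptions made for illustration. A real system would also need to normalize paths (symlinks, "." segments, encodings) before comparing them.

```typescript
// Illustrative sketch of a deny-by-default permission gate with an audit trail.
// Action names, grant shape, and path matching rules are assumptions.

type Action = "read" | "write" | "execute";

interface Grant {
  action: Action;
  pathPrefix: string; // directory the grant covers, e.g. "/workspace/transcripts"
}

interface AuditEntry {
  at: string;
  agent: string;
  action: Action;
  target: string;
  allowed: boolean;
}

// True if `target` is `prefix` itself or lies under it on a path-segment boundary.
// Any ".." segment is refused outright rather than resolved.
function isWithin(target: string, prefix: string): boolean {
  if (target.split("/").includes("..")) return false;
  const base = prefix.endsWith("/") ? prefix : `${prefix}/`;
  return target === prefix || target.startsWith(base);
}

class PermissionGate {
  private readonly grants: Map<string, Grant[]>;
  readonly audit: AuditEntry[] = [];

  constructor(grants: Record<string, Grant[]>) {
    this.grants = new Map(Object.entries(grants));
  }

  // Allows an action only when an explicit grant covers it; unknown agents get nothing.
  // Every decision, allowed or denied, is appended to the audit log.
  check(agent: string, action: Action, target: string): boolean {
    const allowed = (this.grants.get(agent) ?? []).some(
      (grant) => grant.action === action && isWithin(target, grant.pathPrefix),
    );
    this.audit.push({ at: new Date().toISOString(), agent, action, target, allowed });
    return allowed;
  }
}
```

The gate records denials as well as approvals. That way the audit log shows what an agent tried to do, not only what it was allowed to do.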

What We Apply at sipsip.ai

The Claude Code incident reinforces choices we already treat as non-negotiable.

1. Separation of Concerns in the Stack

Our stack is explicitly split into:

- frontend interaction layer (Next.js)

- processing/runtime services (transcription + summarization pipeline)

- managed data layer (Supabase schema, policies, functions)

This keeps blast radius smaller and makes policy enforcement easier at each layer.

2. Controlled Processing Pipeline

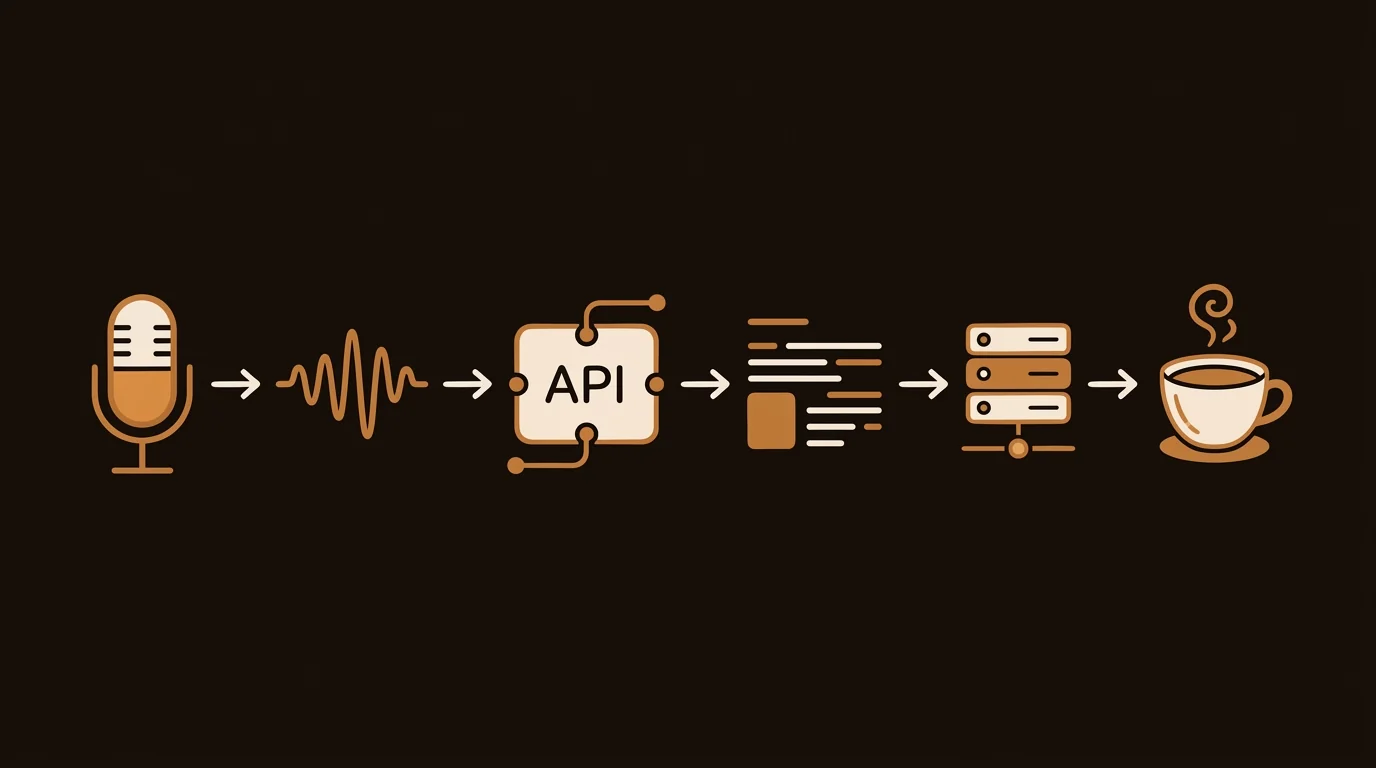

For transcription and summarization workflows, we use structured pipeline stages instead of opaque monolithic requests.

That allows us to enforce:

- per-stage validation

- audit events on state transitions

- controlled callbacks and finalization paths

- deterministic retry behavior

In practice, this reduces silent failure and improves incident response speed.
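A small state machine is one way to express stage boundaries, per-stage validation, and audit events on state transitions. The stage names below loosely follow the transcription and summarization flow described above. However, the stage names, the Validator type, and the in-memory event list are all illustrative assumptions. A real pipeline would persist its events rather than hold them in memory.

```typescript
// Illustrative sketch: pipeline stages as an explicit state machine.
// Stage names, the Validator type, and the in-memory event log are assumptions.

type Stage = "queued" | "transcribing" | "summarizing" | "finalized" | "failed";

// Only these transitions are legal; anything else is a bug and throws.
const ALLOWED: Record<Stage, readonly Stage[]> = {
  queued: ["transcribing", "failed"],
  transcribing: ["summarizing", "failed"],
  summarizing: ["finalized", "failed"],
  finalized: [],
  failed: [],
};

interface JobEvent {
  jobId: string;
  from: Stage;
  to: Stage;
  at: string;
  detail?: string;
}

// Returns an error message when a stage's output is unacceptable, or null if it is fine.
type Validator = (output: string) => string | null;

const nonEmpty: Validator = (output) => (output.trim().length > 0 ? null : "empty output");

class PipelineJob {
  readonly id: string;
  stage: Stage = "queued";
  readonly events: JobEvent[] = [];

  constructor(id: string) {
    this.id = id;
  }

  // Moves to `to` if the transition is legal and records an audit event for it.
  transition(to: Stage, detail?: string): void {
    if (!ALLOWED[this.stage].includes(to)) {
      throw new Error(`illegal transition ${this.stage} -> ${to} (job ${this.id})`);
    }
    this.events.push({ jobId: this.id, from: this.stage, to, at: new Date().toISOString(), detail });
    this.stage = to;
  }

  // Validates the current stage's output before advancing; a failed check
  // moves the job to "failed" with the reason instead of passing bad data on.
  completeStage(output: string, next: Stage, validate: Validator): void {
    const problem = validate(output);
    this.transition(problem === null ? next : "failed", problem ?? undefined);
  }
}
```

Because illegal transitions throw, a bug in the orchestration code surfaces immediately instead of leaving a job in an undefined state.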

3. Data Security Controls We Prioritize

We focus on concrete controls that matter in day-to-day operations:

- strict separation of secrets by environment

- service-role usage constrained to server-side paths

- explicit auth checks before tool actions

- auditable job events for critical pipeline steps

- least-privilege data access patterns wherever possible

No single control is enough. Security comes from layered constraints.
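Some of these controls can be checked mechanically. The sketch below assumes a Next.js frontend, as in the stack above. Next.js inlines any variable prefixed with NEXT_PUBLIC_ into the browser bundle, so the sketch does three things:

- flags any such variable whose name looks like a secret;
- reports variables whose value is identical across environments;
- provides a guard that refuses to run server-only code wherever a window global exists.

The name patterns and the shape of the per-environment maps are assumptions you would adapt. This is not a complete secret scanner.

```typescript
// Illustrative sketch of two mechanical secret checks plus a server-only guard.
// The sensitive-name patterns and the per-environment map shape are assumptions.

type EnvVars = Record<string, string | undefined>;

const SENSITIVE_NAME = [/SERVICE_ROLE/i, /SECRET/i, /PRIVATE/i, /PASSWORD/i];

// Next.js inlines NEXT_PUBLIC_* variables into client-side JavaScript, so a
// sensitive-looking name with that prefix is probably a leak.
function findExposedSecrets(env: EnvVars): string[] {
  return Object.keys(env)
    .filter((name) => name.startsWith("NEXT_PUBLIC_"))
    .filter((name) => SENSITIVE_NAME.some((pattern) => pattern.test(name)))
    .sort();
}

// Reports variable names whose value is identical in two or more environments,
// which breaks the "separate secrets per environment" rule.
function findReusedSecrets(byEnvironment: Record<string, EnvVars>): string[] {
  const valuesByName = new Map<string, string[]>();
  for (const vars of Object.values(byEnvironment)) {
    for (const [name, value] of Object.entries(vars)) {
      if (value === undefined || value === "") continue;
      valuesByName.set(name, [...(valuesByName.get(name) ?? []), value]);
    }
  }
  return Array.from(valuesByName.entries())
    .filter(([, values]) => new Set(values).size < values.length)
    .map(([name]) => name)
    .sort();
}

// Throws if called where a browser `window` global exists, so code holding a
// service-role key fails loudly instead of running client-side.
function assertServerOnly(label: string): void {
  if ("window" in globalThis) {
    throw new Error(`${label} must only run on the server`);
  }
}
```

Run findReusedSecrets over secret variables only. Non-secret configuration, such as a shared public URL, is legitimately identical across environments and would otherwise show up as a false positive.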

4. Release Hygiene as Security Hygiene

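The source map lesson applies to every team that ships JavaScript. A source map can embed the original source text in its sourcesContent field, so a map that ends up in a public package can expose the very code it was generated from.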

Practical controls we recommend:

- CI gate that fails builds when production maps include embedded source content

- package allowlist (the package.json files field) to minimize published artifacts

- pre-publish package inspection in CI, not only on local machines

- artifact retention for traceability and rollback investigation

These are low-cost controls with very high downside protection.
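The first two controls are straightforward to prototype. The sketch below does two things:

- it flags any built .map file whose sourcesContent array embeds non-empty source text;
- it compares the list of files about to be published against an allowlist.

Both functions are pure over file paths and contents, which keeps them easy to test. A CI wrapper would do the I/O: read the build directory and the packed file list, then exit non-zero if either check returns anything. The packed file list could come from npm pack --dry-run --json, but confirm its output shape for your npm version. The allowlist matching here (exact names, or directory prefixes ending in "/") is a simplification and not npm's own glob semantics.

```typescript
// Illustrative sketch of two release gates, written as pure functions over
// file paths and contents so they are easy to unit test. Names are assumptions.

interface BuiltFile {
  path: string;
  content: string;
}

// A source map can carry the original source text in its `sourcesContent`
// array. Flag every .map file that embeds non-empty source text.
function mapsWithEmbeddedSources(files: BuiltFile[]): string[] {
  return files
    .filter((file) => file.path.endsWith(".map"))
    .filter((file) => {
      try {
        const map = JSON.parse(file.content) as { sourcesContent?: unknown };
        return (
          Array.isArray(map.sourcesContent) &&
          map.sourcesContent.some((src) => typeof src === "string" && src.length > 0)
        );
      } catch {
        return true; // unparseable map: fail closed so a human looks at it
      }
    })
    .map((file) => file.path);
}

// Entries ending in "/" allow a whole directory; other entries must match exactly.
function unexpectedPublishedFiles(paths: string[], allowlist: string[]): string[] {
  return paths.filter(
    (path) => !allowlist.some((entry) => (entry.endsWith("/") ? path.startsWith(entry) : path === entry)),
  );
}
```

The two checks cover different gaps, so neither makes the other redundant. A directory allowlist such as "dist/" still lets a leaky map through, and a clean map check says nothing about stray files like .env.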

Future Direction: Where AI Products Are Going

Based on current engineering trajectories, the next phase of AI products will likely be defined by three capabilities.

1. Orchestration Over Single-Turn Intelligence

Products that can decompose tasks, coordinate steps, and recover from failure will outperform products optimized only for one-shot answers.

2. Policy-Governed Automation

Enterprise adoption will reward systems that expose clear, enforceable rules for what automation can and cannot do.

3. Trust Through Operational Quality

Users trust AI products when behavior is predictable under pressure:

- failures are explicit

- retries are bounded

- data boundaries are respected

- incident handling is fast and transparent

In other words, reliability and security become part of product UX.

Action Checklist for Founders and Engineering Leads

If you build AI workflows, do the following this quarter:

- Add automated source map and artifact checks to release CI.

- Document your runtime permission model and enforce it in code.

- Add stage-level audit events for critical workflows.

- Verify secret scoping and rotation policies by environment.

- Test long-session behavior under token and timeout pressure.

These are pragmatic moves that improve both product quality and risk posture.

For adjacent implementation patterns, see:

- From the Claude Code Leak: Architecture Breakdown and Future Direction

- How AI Video Summarizers Work

- YouTube Video Summarizer API

Final Take

The most important lesson from the Claude Code leak is simple:

AI product advantage is moving from raw model quality to system quality.

That means architecture, orchestration, and data security are not side topics. They are the product.

Frequently Asked Questions

What is the biggest product lesson from the Claude Code leak?

The key lesson is that modern AI tools are execution systems, not just chat interfaces. Product quality now depends on orchestration, permissions, integration reliability, and security controls as much as model quality.

How should startups respond to source map leak risk?

Treat release artifacts as a security boundary: enforce CI checks for source map policies, limit published files to strict allowlists, and validate production packages before publish.

How do you improve AI product security after a source map incident?

Start with release controls (artifact scanning and publish allowlists), then harden runtime boundaries (permissions, secret scoping, audit events, and least-privilege data access).

Across 8+ years, I've built full-stack and platform systems using TypeScript, Node, React, Java, AWS, and Azure, applying AI to practical problems and turning ambitious ideas into shipped products.