Word error rate (WER) is the standard metric for speech-to-text accuracy: the number of substituted, deleted, and inserted words divided by the number of words in a reference transcript. The leading models in 2026 achieve under 5% WER on clean professional audio, but real-world performance varies by a factor of 3–4x depending on recording conditions, vocabulary domain, and model architecture. Here's what the benchmarks actually show, and what determines whether you hit 95% or 75% accuracy on your recordings.
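A minimal sketch of the calculation, using word-level edit distance against a reference transcript. It assumes lowercasing and whitespace tokenization only (real evaluations usually normalize text more carefully), and the function name `word_error_rate` is illustrative:

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)
```

For example, comparing the reference "the cat sat on the mat" with the output "the cat sat on a mat" yields one substitution over six reference words, or about 16.7% WER. Because insertions count as errors, WER can exceed 100%, so "accuracy" quoted as 100% minus WER is a convenient shorthand rather than a strict percentage.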

Two Different Problems Called "Speech to Text"

The term covers two distinct use cases that work differently under the hood:

1. Live dictation: You speak, text appears immediately. Used for voice typing in Google Docs, Siri, Cortana, or accessibility tools. Optimized for low latency — results appear in under a second because they're processed in small audio chunks.

2. Audio file transcription: You have a complete recording; you want a complete transcript. Used for interviews, meetings, podcasts, lectures. Optimized for accuracy — the model can see the full audio context before generating output, which meaningfully improves results.
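One way to picture the difference is how much audio the recognizer sees per call. A rough sketch, assuming the audio is already a sequence of samples at 16kHz and using a hypothetical 200 ms chunk size (real streaming systems pick their own chunk sizes and usually carry some context between chunks):

```python
from typing import Iterator, List, Sequence

def stream_chunks(samples: Sequence[float], sample_rate: int = 16_000,
                  chunk_ms: int = 200) -> Iterator[List[float]]:
    """Yield fixed-size chunks, as a live dictation front end might feed a model."""
    chunk_size = sample_rate * chunk_ms // 1000
    if chunk_size <= 0:
        raise ValueError("chunk_ms is too small for this sample rate")
    for start in range(0, len(samples), chunk_size):
        yield list(samples[start:start + chunk_size])

one_second = [0.0] * 16_000                    # one second of silence
live_inputs = list(stream_chunks(one_second))  # live dictation: short pieces
file_input = one_second                        # file transcription: everything at once
```

In the live case the model has to commit to text after each short piece; in the file case it can use everything before and after a word to resolve ambiguity.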

At sipsip.ai, we focus on the second use case: transcribing audio and video files you've already recorded, where accuracy matters more than real-time speed.

How Modern ASR Models Work

The current generation of speech-to-text AI is built on transformer architectures — the same foundational design as large language models, but applied to audio rather than text.

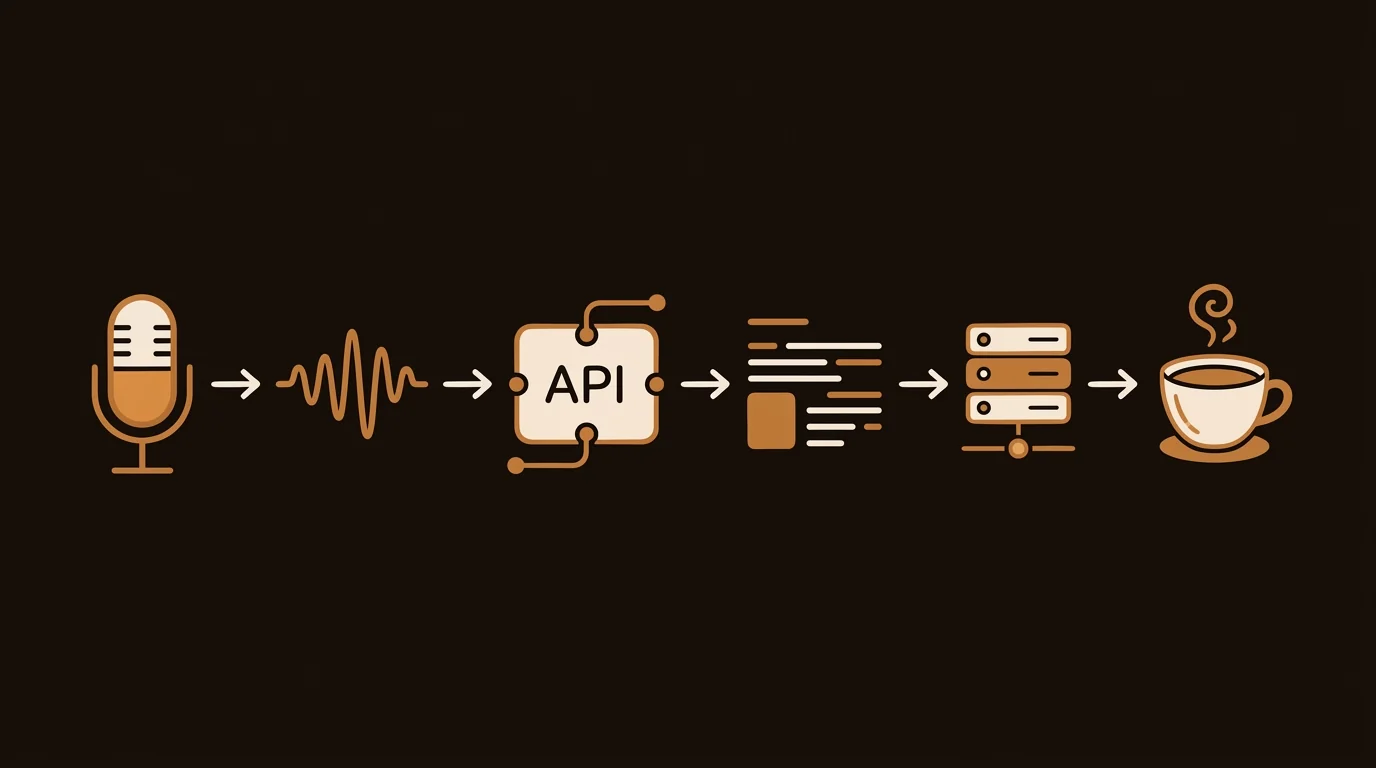

The core pipeline:

1. Audio preprocessing: The raw audio waveform is converted into a mel spectrogram, a 2D representation where the x-axis is time, the y-axis is frequency, and pixel brightness represents energy at that frequency/time point. This is what the model actually "sees."

2. Encoder: A transformer encoder processes the spectrogram and builds a rich internal representation of the audio, capturing phonemes, prosody, rhythm, and context across the full sequence.

3. Decoder: A transformer decoder generates the text output token by token, using both the encoder's audio representation and the text generated so far. This auto-regressive process is what allows the model to maintain context across long sentences and paragraphs.

4. Post-processing: Raw output from many ASR models is all-lowercase with no punctuation. Post-processing adds capitalization, punctuation, and formatting, either with a separate language model or, as in Whisper, by training the decoder to produce them directly.
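The decoder step can be sketched as a greedy loop that repeatedly picks the most likely next token given the audio and the text so far. This is a toy illustration, not any real model's API: `score_next` stands in for a transformer decoder, `toy_scorer` is a hypothetical stub that simply reads words out of its input, and production systems often use beam search rather than pure greedy selection.

```python
from typing import Callable, Dict, List, Sequence

EOS = "<eos>"  # end-of-sequence marker (hypothetical token name)

Scorer = Callable[[Sequence[str], List[str]], Dict[str, float]]

def greedy_decode(audio_features: Sequence[str], score_next: Scorer,
                  max_tokens: int = 50) -> List[str]:
    """Generate tokens one at a time, always taking the highest-scoring one."""
    tokens: List[str] = []
    for _ in range(max_tokens):
        # Each step is conditioned on the audio AND on everything emitted so far.
        scores = score_next(audio_features, tokens)
        best = max(scores, key=scores.get)
        if best == EOS:
            break
        tokens.append(best)
    return tokens

def toy_scorer(audio_features: Sequence[str],
               tokens_so_far: List[str]) -> Dict[str, float]:
    """Stand-in for a real decoder. Here the 'features' are just the words;
    a real encoder would produce numeric vectors instead."""
    position = len(tokens_so_far)
    if position < len(audio_features):
        return {audio_features[position]: 1.0, EOS: 0.0}
    return {EOS: 1.0}
```

Calling `greedy_decode(["hello", "world"], toy_scorer)` returns `["hello", "world"]`. The point of the sketch is the loop shape: every new token depends on all previous ones, which is where the long-range context comes from.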

The key leap that made modern ASR dramatically better was less about architecture than about scale: large-scale pre-training on diverse audio. The original OpenAI Whisper release was trained on 680,000 hours of multilingual audio, far more than most earlier models, which is why it generalizes well to accents, languages, and audio conditions that earlier systems failed on.

Whisper vs. Deepgram: The Two Dominant Approaches

The current ASR landscape is effectively split between two paradigms:

OpenAI Whisper (open-source, used by sipsip.ai for uploaded files)

- Trained on 680k hours of multilingual data

- Available in five model sizes (tiny → large-v3)

- Exceptional multilingual performance — 99 languages

- Best-in-class on accented English and domain-specific vocabulary

- Slower than real-time on CPU; GPU deployment required for production speed

- Word Error Rate on standard benchmarks: ~3–5% (large-v3)

Deepgram Nova-2 (commercial API)

- Purpose-built for production deployment: fast, streaming-capable

- Better speaker diarization (multi-speaker labeling) than Whisper

- Lower latency for live applications

- According to Deepgram's benchmarks, Nova-2 achieves sub-10% WER on professional audio

- Used by sipsip.ai for meeting recordings where speaker separation matters

Neither is universally better. Whisper wins on multilingual and accented speech. Nova-2 wins on real-time requirements and multi-speaker audio.

What Determines Transcription Accuracy

Model choice matters less than most people expect. The factors that actually drive accuracy, in order of impact:

1. Recording quality (biggest factor): Clear speech, minimal background noise, and good microphone placement account for the majority of accuracy variance. A $50 USB microphone in a quiet room outperforms a $2,000 microphone in a noisy open-plan office.

2. Speech clarity: Fast speech, overlapping speakers, heavy accents, or mumbling all reduce accuracy. Articulate speech at a natural pace consistently outperforms attempts to "speak for the microphone."

3. Domain vocabulary: General ASR models are trained on general speech. Technical terms (medical nomenclature, legal language, engineering jargon, brand names) may be misrecognized. Purpose-built medical and legal ASR models are available, but general models (especially Whisper large-v3) have improved significantly on technical vocabulary.

4. Audio encoding: High-bitrate audio (WAV, FLAC, high-quality MP3) transcribes more accurately than heavily compressed audio (phone call codecs, low-bitrate MP3). If you're recording for transcription, export at 128kbps or higher.

WER Benchmarks by Recording Type

The same model produces dramatically different WER depending on recording conditions. From our processing of audio at sipsip.ai:

| Recording Type | Whisper large-v3 WER | Deepgram Nova-2 WER | Notes |
|---|---|---|---|
| Studio/quiet room, single speaker | 3–5% | 4–7% | Near-human accuracy |
| Professional interview, mic'd | 5–8% | 5–8% | Minimal editing needed |
| Field recording, ambient noise | 10–18% | 12–20% | Noise reduction helps |
| Phone/VoIP recording | 12–22% | 8–15% | Nova-2 handles codec artifacts better |
| Multi-speaker meeting | 15–25% | 8–15% | Nova-2 diarization advantage |
| Accented English, non-native speaker | 8–15% | 12–20% | Whisper multilingual training advantage |
| Technical domain vocabulary | 8–15% | 10–18% | Domain-specific models reduce this significantly |

Note: WER numbers are approximate and vary by specific audio. These reflect averages across production volume at sipsip.ai.
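To make these percentages concrete, it can help to translate WER into an expected number of word-level corrections. A back-of-the-envelope sketch, assuming a conversational pace of about 140 words per minute (an assumed default; adjust it for your speakers) and treating each error as one correction:

```python
def expected_corrections(duration_min: float, wer_percent: float,
                         words_per_min: float = 140.0) -> int:
    """Estimate how many words an editor would need to fix in a transcript."""
    total_words = duration_min * words_per_min
    return round(total_words * wer_percent / 100.0)

# One hour of audio is about 8,400 words at the assumed pace.
studio_hour = expected_corrections(60, 4)    # 4% WER, within the studio range above
meeting_hour = expected_corrections(60, 20)  # 20% WER, within the meeting range above
```

Under these assumptions, a one-hour studio recording at 4% WER needs about 336 corrections, while the same hour of a multi-speaker meeting at 20% WER needs about 1,680.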

How to Maximize Transcription Accuracy

The biggest gains in WER come from controllable factors — not from switching models:

1. Record at 16kHz or higher. Most ASR models downsample to 16kHz internally. Recording above this provides no additional accuracy, but audio captured below it (for example, 8kHz telephone audio) noticeably degrades output.

2. Reduce background noise at the source. A directional microphone or noise-canceling headset in a quiet room consistently outperforms expensive post-processing on a noisy recording. The model can't recover speech that was masked during capture.

3. Speak at a natural pace. Both deliberate slowing and rushing increase WER. Natural conversational pace — roughly 130–150 words per minute — is what most models are optimized for.

4. Choose the right model for your content. Whisper large-v3 for multilingual, accented, or technically specialized content. Deepgram Nova-2 for meetings with multiple speakers or real-time requirements. The model gap narrows significantly with good audio quality — below 10% WER, both models produce output that requires similar editing effort.

5. Export at high bitrate. WAV or FLAC for archival recordings. MP3 at 128kbps minimum — lower bitrate compression introduces codec artifacts that look like background noise to the transcription model.
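The sample-rate tip can be checked automatically before uploading. A small sketch using Python's standard `wave` module, which reads uncompressed PCM WAV files only; the function name `check_wav` and the shape of the returned dictionary are illustrative choices, and the 16kHz threshold comes from the first tip above:

```python
import wave

MIN_SAMPLE_RATE_HZ = 16_000  # threshold from the recording advice above

def check_wav(path: str) -> dict:
    """Report the sample rate and duration of a PCM WAV file."""
    with wave.open(path, "rb") as wav:
        rate = wav.getframerate()
        frames = wav.getnframes()
        channels = wav.getnchannels()
    return {
        "sample_rate_hz": rate,
        "channels": channels,
        "duration_s": frames / rate,
        "meets_minimum": rate >= MIN_SAMPLE_RATE_HZ,
    }
```

A recording that fails this check was captured below 16kHz, and resampling it afterwards will not restore the missing detail; the fix has to happen at the recording stage.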

Speech-to-Text Tool Comparison: Which to Use in 2026

Knowing the model architecture is useful. Knowing which tool to actually use is more practical. Here's how the main options compare:

For file transcription (offline, non-real-time)

sipsip.ai Audio Transcriber — the most complete managed option. Upload MP3, MP4, WAV, or M4A; get a clean transcript with speaker labels, timestamps, an AI summary, and key points. Powered by Whisper large-v3 on the backend. 20 free credits, no credit card.

Whisper API (OpenAI) — direct API access at $0.006/min. Best for developers who want to control the output format. Batch-only; no streaming. 25MB file size limit per API call requires chunking for longer audio.
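The 25MB limit translates into a maximum duration per request that depends on the file's bitrate. A rough planning sketch for constant-bitrate audio, assuming the limit means 25 × 1024 × 1024 bytes and leaving a hypothetical 5% margin for container overhead; `max_chunk_seconds` and `plan_chunks` are illustrative helpers, not part of any SDK:

```python
import math
from typing import List, Tuple

LIMIT_BYTES = 25 * 1024 * 1024  # assumption: "25MB" interpreted as 25 MiB

def max_chunk_seconds(bitrate_kbps: float, safety: float = 0.95) -> float:
    """Longest duration that fits under the size limit at a constant bitrate."""
    bytes_per_second = bitrate_kbps * 1000 / 8
    return LIMIT_BYTES * safety / bytes_per_second

def plan_chunks(duration_s: float, bitrate_kbps: float) -> List[Tuple[float, float]]:
    """Split a recording into equal-length (start, end) spans that each fit the limit."""
    limit = max_chunk_seconds(bitrate_kbps)
    count = max(1, math.ceil(duration_s / limit))
    step = duration_s / count
    return [(i * step, min(duration_s, (i + 1) * step)) for i in range(count)]
```

At 128kbps this works out to roughly 26 minutes per request, so a two-hour recording needs five chunks of 24 minutes each. Cutting at fixed timestamps can split a word in half; in practice it is generally better to move each boundary to a nearby pause.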

AssemblyAI — $0.012/min with speaker diarization, sentiment analysis, and auto-chapters available as add-ons. Better diarization quality than Whisper on 2-speaker interview content.

For real-time / live transcription

Deepgram Nova-2 streaming API — sub-300ms first-token latency. The standard choice for production real-time transcription. $0.0059/min for streaming.

Otter.ai — consumer-friendly real-time transcription for meetings. Shows live transcript during Google Meet, Zoom, and Teams calls. 300 minutes/month free.

Google Cloud STT v2 — best for GCP-native teams who need ecosystem integration over best-in-class accuracy.

For professional human-verified transcription

Rev — AI transcription at $0.02/min; human-verified at $1.25/min with 99%+ accuracy. Only justified for legal, medical, or archival content.

The real decision isn't model choice; it's pipeline choice. The same Whisper large-v3 model can produce transcripts ranging from 3% to 20% WER depending on whether you apply noise reduction before transcription, use a cleanup LLM pass after, and properly handle multi-speaker diarization. Most "which AI is best?" comparisons miss this: the tool wrapper matters as much as the underlying model.

Frequently Asked Questions

What is the most accurate speech-to-text AI in 2026?

For general use, OpenAI Whisper (large-v3) and Deepgram Nova-2 are the leading models. Whisper performs better on multilingual and accented speech; Deepgram is faster and performs better on real-time streaming and multi-speaker audio. Both significantly outperform older ASR services.

What is the difference between speech-to-text and transcription?

Speech-to-text refers to the underlying AI technology that converts spoken audio to text. Transcription is the output — the formatted text document. A transcription tool wraps speech-to-text AI with additional processing: punctuation, capitalization, timestamps, and formatting.

Can speech-to-text handle multiple speakers?

Yes — this is called speaker diarization. Models like Deepgram Nova-2 label speakers as "Speaker 1", "Speaker 2", etc. Accuracy is good for 2–4 speakers in a clean environment; it degrades with overlapping speech or more than 5 speakers.

How does audio quality affect speech-to-text accuracy?

Audio quality is the single biggest accuracy factor. A clear recording at 16kHz sample rate in a quiet room yields 95%+ accuracy. Background noise, room echo, or low-bitrate compression can drop accuracy to 70–80%.

Is speech-to-text accurate enough for professional use?

Yes, for most use cases. Modern ASR achieves word error rates under 5% on clean professional audio, which is comparable to human transcription accuracy at a fraction of the cost and turnaround time. For legally sensitive documents or content requiring 100% accuracy, human review of the AI output is still recommended.

How much does speech-to-text API transcription cost in 2026?

Deepgram Nova-2 runs at $0.0043/min ($0.26/hour) for batch transcription. OpenAI Whisper API is $0.006/min ($0.36/hour). AssemblyAI is $0.012/min (~$0.72/hour). Self-hosted Whisper on a GPU costs roughly $0.002/min at current spot prices. For end-users (not developers), sipsip.ai's managed transcription is available on a credit plan starting with 20 free credits.
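The per-minute prices above are easier to compare as a monthly bill. A small sketch using only the figures quoted in this answer (they may have changed since, and the self-hosted number is a rough estimate); the helper names are illustrative:

```python
PRICES_PER_MIN_USD = {
    "Deepgram Nova-2 (batch)": 0.0043,
    "OpenAI Whisper API": 0.006,
    "AssemblyAI": 0.012,
    "Self-hosted Whisper (GPU, approx.)": 0.002,
}

def cost_usd(minutes: float, price_per_min: float) -> float:
    """Cost of transcribing `minutes` of audio, rounded to cents."""
    return round(minutes * price_per_min, 2)

def monthly_costs(hours_per_month: float) -> dict:
    """Monthly cost per provider for a given volume of audio."""
    minutes = hours_per_month * 60
    return {name: cost_usd(minutes, price)
            for name, price in PRICES_PER_MIN_USD.items()}
```

For 100 hours of audio per month, `monthly_costs(100)` gives $25.80 for Deepgram Nova-2, $36.00 for the Whisper API, $72.00 for AssemblyAI, and about $12.00 for self-hosted Whisper, before accounting for engineering time or idle GPU capacity.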

Across 8+ years, I've built full-stack and platform systems using TypeScript, Node, React, Java, AWS, and Azure, applying AI to practical problems and turning ambitious ideas into shipped products.