Podcast episodes are long, publishing schedules are inconsistent, and most of what's said in a 2-hour interview could fit in a 3-minute read. Building an AI podcast summarizer that handles this reliably — at scale, across thousands of feeds and formats — is more interesting than it looks from the outside.

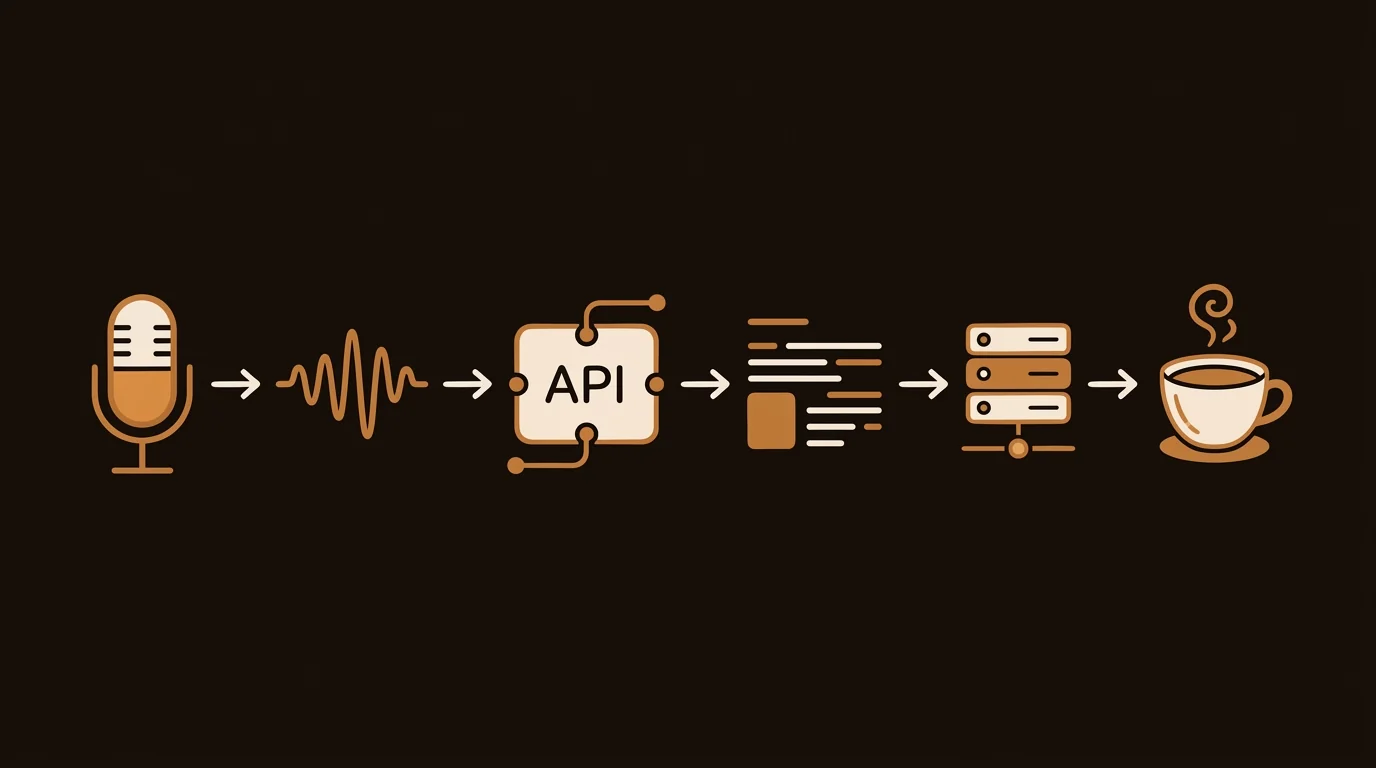

Here's the complete pipeline we built at sipsip.ai, with the tradeoffs at each decision point.

Why Podcasts Are Harder Than YouTube Videos

At first glance, a podcast summarizer should be simpler than a video summarizer — there's no visual channel to worry about. In practice, podcast audio has characteristics that make transcription harder than most YouTube content:

- Compression artifacts. Podcast hosts frequently record over Zoom, Discord, or Skype. The resulting audio undergoes lossy compression (Opus, or MP3 at 64–128 kbps), which strips high-frequency components. Consonants become ambiguous: pairs such as "P" and "B" or "S" and "F" are harder to tell apart.

- Multiple speakers without visual cues. In a video interview, a camera switch signals a speaker change. In audio-only content, speaker diarization (distinguishing who said what) must be inferred from acoustic features alone.

- Variable recording quality. A remote guest recorded on a laptop mic, in a kitchen, with HVAC noise — this is extremely common. Audio preprocessing before transcription is not optional.

- Casual speech patterns. Podcasters say "um," "uh," and "like" constantly, and they often abandon a sentence and restart it. Raw transcripts of podcast audio are significantly noisier than transcripts of prepared talks.

Each of these has a mitigation strategy. Let's walk through the pipeline.

Step 1: Ingestion — Getting the Audio

Podcast content arrives through two channels:

RSS feed parsing: Podcasts publish an XML RSS feed listing every episode with metadata (title, description, published date, duration) and a direct MP3 or M4A URL. We parse the feed with a standard RSS library, extract episode URLs, and filter by publish date for "new episode" detection.
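As a rough illustration of the "new episode" check, here is a minimal sketch using only the Python standard library. The function name `new_episodes`, the sample feed, and the cutoff date are assumptions made for the example; a production parser would more likely use a dedicated RSS library and handle malformed feeds.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def new_episodes(feed_xml: str, since: datetime) -> list[dict]:
    """Return episodes published after `since`, newest first."""
    root = ET.fromstring(feed_xml)
    episodes = []
    for item in root.iter("item"):
        enclosure = item.find("enclosure")  # holds the direct audio URL
        pub_date = item.findtext("pubDate")
        if enclosure is None or pub_date is None:
            continue  # skip items with no audio or no date
        published = parsedate_to_datetime(pub_date)
        if published.tzinfo is None:
            # Treat dates without a zone as UTC so comparisons work.
            published = published.replace(tzinfo=timezone.utc)
        if published > since:
            episodes.append({
                "title": item.findtext("title", default="").strip(),
                "audio_url": enclosure.get("url"),
                "published": published,
            })
    return sorted(episodes, key=lambda e: e["published"], reverse=True)


# Hypothetical two-episode feed used only for this example.
SAMPLE_FEED = """<?xml version="1.0"?>
<rss version="2.0"><channel><title>Example Show</title>
<item><title>Episode 1</title>
<pubDate>Mon, 01 Jan 2024 08:00:00 GMT</pubDate>
<enclosure url="https://example.com/ep1.mp3" type="audio/mpeg" length="1"/></item>
<item><title>Episode 2</title>
<pubDate>Mon, 08 Jan 2024 08:00:00 GMT</pubDate>
<enclosure url="https://example.com/ep2.mp3" type="audio/mpeg" length="1"/></item>
</channel></rss>"""

cutoff = datetime(2024, 1, 5, tzinfo=timezone.utc)
fresh = new_episodes(SAMPLE_FEED, cutoff)
```

Storing the publish date of the last processed episode per feed and passing it as `since` is one simple way to keep polling idempotent.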

Direct URL or file upload: Users can also submit a single episode URL or upload an audio file directly. This bypasses RSS and handles one-off summarization requests.

Format handling: Podcast audio comes in MP3, M4A, OGG, FLAC, and WAV. We normalize everything to 16 kHz mono WAV before transcription, which matches the 16 kHz sample rate Whisper was trained on and performs best with. Stereo-to-mono conversion is important: some podcast recordings place different speakers on the L/R channels, and mixing them down produces better diarization results than processing stereo natively.
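One common way to do this conversion is with the ffmpeg command-line tool. The sketch below assumes ffmpeg is installed and on `PATH`; the article does not say which tool the pipeline actually uses, and the helper names and the `normalized` output directory are hypothetical.

```python
import subprocess
from pathlib import Path


def ffmpeg_normalize_cmd(src: str, dst: str) -> list[str]:
    """Build an ffmpeg command that converts any input to 16 kHz mono 16-bit WAV."""
    return [
        "ffmpeg",
        "-y",                  # overwrite the output file if it exists
        "-i", src,             # input: MP3, M4A, OGG, FLAC, WAV, ...
        "-ac", "1",            # downmix to a single (mono) channel
        "-ar", "16000",        # resample to 16 kHz
        "-c:a", "pcm_s16le",   # 16-bit little-endian PCM
        dst,
    ]


def normalize(src: str, out_dir: str = "normalized") -> Path:
    """Run the conversion. Requires the ffmpeg binary on PATH."""
    Path(out_dir).mkdir(parents=True, exist_ok=True)
    dst = Path(out_dir) / (Path(src).stem + ".wav")
    subprocess.run(ffmpeg_normalize_cmd(src, str(dst)), check=True)
    return dst


# Building the command does not touch the filesystem or call ffmpeg.
cmd = ffmpeg_normalize_cmd("episode.m4a", "episode.wav")
```

Keeping command construction separate from execution makes the conversion settings easy to inspect and unit-test without audio files.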

Duration limits: Very long podcasts (3+ hours) are split into segments during download to allow parallel transcription. A 3-hour episode transcribed as a single file takes ~90 seconds on a V100 GPU. Splitting into 3 × 1-hour segments and running in parallel takes ~35 seconds.
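The split-and-merge logic can be sketched as follows. This is an illustration under assumptions: `split_points`, `transcribe_parallel`, and the stand-in `fake_transcribe` are hypothetical names, and a thread pool stands in for whatever worker setup a real deployment uses. The key detail is that each segment's timestamps must be shifted by the segment's offset when results are merged.

```python
import math
from concurrent.futures import ThreadPoolExecutor


def split_points(duration_s, max_segment_s=3600.0):
    """Cut [0, duration_s] into equal spans no longer than max_segment_s."""
    if duration_s <= 0:
        return []
    count = math.ceil(duration_s / max_segment_s)
    length = duration_s / count
    return [(i * length, min((i + 1) * length, duration_s)) for i in range(count)]


def transcribe_parallel(spans, transcribe_span):
    """Transcribe each span concurrently and merge results in episode time.

    transcribe_span(start, end) must return segment dicts whose
    timestamps are relative to the start of that span.
    """
    with ThreadPoolExecutor(max_workers=max(1, len(spans))) as pool:
        results = list(pool.map(lambda span: transcribe_span(*span), spans))
    merged = []
    for (offset, _end), segments in zip(spans, results):
        for seg in segments:
            # Shift span-relative times back onto the episode timeline.
            merged.append({**seg, "start": seg["start"] + offset,
                           "end": seg["end"] + offset})
    return merged


def fake_transcribe(start, end):
    # Stand-in for a real transcription call: one segment per span.
    return [{"start": 0.0, "end": end - start, "text": f"audio from {start:.0f}s"}]


spans = split_points(3 * 3600)
merged = transcribe_parallel(spans, fake_transcribe)
```

Cutting at fixed times can split a word in half, so a real implementation would probably move each boundary to the nearest silence.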

Step 2: Audio Preprocessing

Many podcast summarizers skip this step, and the omission shows up as accuracy complaints in their reviews.

What we apply:

- Voice activity detection (VAD). Using Silero VAD, we strip segments with no speech: intro music, ad breaks, extended pauses. This reduces transcription time by 10–30% for typical podcast episodes.

- Noise reduction. We apply a lightweight spectral subtraction filter to reduce background noise below a threshold. Aggressive noise reduction can introduce artifacts, so we tune conservatively: the goal is to remove constant-spectrum noise (HVAC, computer fans) without distorting speech.

- Normalization. Audio levels are normalized to a consistent RMS volume. This matters when different speakers recorded at very different levels, because Whisper transcribes more reliably on consistently leveled input.

These three steps add 3–8 seconds of processing time but meaningfully improve transcription accuracy on typical podcast content.
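To make the normalization step concrete, here is a minimal pure-Python sketch of RMS leveling on float samples in the range -1.0 to 1.0. The target level of 0.1 and the function names are assumptions for illustration; a real pipeline would operate on NumPy arrays and would likely normalize per speaker or per window rather than over the whole file.

```python
import math


def rms(samples):
    """Root-mean-square level of a list of float samples."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def normalize_rms(samples, target_rms=0.1, peak=1.0):
    """Scale samples so their RMS level equals target_rms.

    Values are clipped to [-peak, peak] so a large gain cannot
    push samples outside the valid range.
    """
    current = rms(samples)
    if current == 0.0:
        return list(samples)  # pure silence: nothing to scale
    gain = target_rms / current
    return [max(-peak, min(peak, s * gain)) for s in samples]


# A quiet guest and a loud host end up at the same level.
quiet_guest = [0.02, -0.02, 0.02, -0.02]
loud_host = [0.5, -0.5, 0.5, -0.5]
leveled_guest = normalize_rms(quiet_guest)
leveled_host = normalize_rms(loud_host)
```

Both clips come out at the same RMS level, which is the property the normalization step relies on.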

Step 3: Transcription with Whisper

Whisper is an open-source transformer model that OpenAI trained on 680,000 hours of weakly supervised multilingual audio covering 99 languages. We run faster-whisper, a reimplementation built on the CTranslate2 inference engine, which achieves a 4–8x speed improvement over the original PyTorch implementation on the same hardware.

Model selection:

| Model | WER (English) | Transcription time (1 hr audio) | VRAM |
|---|---|---|---|
| whisper-tiny | ~15% | ~8 s | 1 GB |
| whisper-base | ~10% | ~15 s | 1 GB |
| whisper-small | ~7% | ~25 s | 2 GB |
| whisper-medium | ~5% | ~50 s | 5 GB |
| whisper-large-v3 | ~3% | ~90 s | 10 GB |

We use large-v3 for all production transcription. For a podcast summarizer, accuracy is more important than speed — a 90-second transcription step followed by 10-second summarization is still under 2 minutes total, which is acceptable for async delivery.
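A minimal sketch of a faster-whisper transcription call is shown below. `WhisperModel` and its `transcribe` method are the library's public API; the device, compute type, beam size, and the `segment_to_dict` helper are assumptions chosen for the example rather than the settings used in production. The library import sits inside the function so the helper can be exercised without a GPU or the package installed.

```python
from types import SimpleNamespace


def segment_to_dict(segment) -> dict:
    """Convert a segment object (start, end, text) into a plain dict."""
    return {
        "start": round(segment.start, 2),
        "end": round(segment.end, 2),
        "text": segment.text.strip(),
    }


def transcribe(path: str, model_size: str = "large-v3") -> list[dict]:
    """Transcribe an audio file with faster-whisper (third-party package)."""
    from faster_whisper import WhisperModel  # imported lazily on purpose

    # Assumed settings: a CUDA GPU with half-precision weights.
    model = WhisperModel(model_size, device="cuda", compute_type="float16")
    # transcribe() returns a lazy generator of segments plus run metadata.
    segments, _info = model.transcribe(path, beam_size=5)
    return [segment_to_dict(s) for s in segments]


# Exercise the conversion helper with a stand-in segment object.
example = segment_to_dict(
    SimpleNamespace(start=0.0, end=4.237, text=" Welcome back to the show. ")
)
```

Because the segments come back as a generator, transcription only runs as the list comprehension consumes them.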

Speaker diarization: We use pyannote.audio for speaker segmentation. It produces speaker turns labeled SPEAKER_00, SPEAKER_01, and so on, which we assign to transcript segments. Quality is good for two-speaker podcasts and degrades with four or more participants.

Output format: The transcription returns a JSON array of segments with start time, end time, speaker label, and text. This timestamped format enables chapter-level summaries tied to specific parts of the episode.
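One simple way to attach speaker labels to transcript segments is to pick, for each segment, the diarization turn with the largest time overlap. The sketch below assumes diarization output has already been converted to a list of `{"start", "end", "speaker"}` dicts; the function names, the `UNKNOWN` fallback, and the sample data are hypothetical, and the article does not specify the exact matching rule used.

```python
import json


def overlap(a_start, a_end, b_start, b_end):
    """Length in seconds of the intersection of two time intervals."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def assign_speakers(segments, turns):
    """Label each transcript segment with the speaker who overlaps it most."""
    labeled = []
    for seg in segments:
        best_speaker, best_overlap = "UNKNOWN", 0.0
        for turn in turns:
            shared = overlap(seg["start"], seg["end"], turn["start"], turn["end"])
            if shared > best_overlap:
                best_speaker, best_overlap = turn["speaker"], shared
        labeled.append({**seg, "speaker": best_speaker})
    return labeled


# Hypothetical transcript segments and diarization turns.
segments = [
    {"start": 0.0, "end": 4.0, "text": "Welcome to the show."},
    {"start": 4.0, "end": 9.5, "text": "Thanks for having me."},
]
turns = [
    {"start": 0.0, "end": 4.2, "speaker": "SPEAKER_00"},
    {"start": 4.2, "end": 10.0, "speaker": "SPEAKER_01"},
]
labeled = assign_speakers(segments, turns)
transcript_json = json.dumps(labeled, indent=2)
```

The resulting JSON array has the four fields described above: start time, end time, speaker label, and text.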

Step 4: Semantic Chunking of Podcast Transcripts

A 1-hour podcast at ~150 words/minute produces ~9,000 words — about 12,000 tokens. This fits easily within GPT-4o's 128K context or Claude's 200K context without any chunking.
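The arithmetic behind that estimate can be written down directly. The ratio of 4/3 tokens per English word is a common rule of thumb and an assumption here, as are the function names and the 4,000-token reserve for the prompt and the response; actual token counts depend on the tokenizer.

```python
def estimate_tokens(duration_min, words_per_min=150, tokens_per_word=4 / 3):
    """Rough transcript size in tokens for an episode of the given length."""
    return round(duration_min * words_per_min * tokens_per_word)


def fits_in_context(duration_min, context_tokens, reserve=4000):
    """True if the transcript plus a reserve for prompt and output fits."""
    return estimate_tokens(duration_min) + reserve <= context_tokens


one_hour = estimate_tokens(60)
three_hours = estimate_tokens(180)
fits_128k = fits_in_context(180, 128_000)
```

By this estimate a 1-hour episode is about 12,000 tokens and a 3-hour episode about 36,000, both well inside a 128K window.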

For longer episodes (2+ hours, ~18,000+ words), we chunk the transcript semantically:

- Identify natural topic transitions using a sentence embedding model (we use sentence-transformers/all-MiniLM-L6-v2)

- Group consecutive segments by cosine similarity — a drop in similarity signals a topic shift

- Split at those boundaries

This produces chunks that align roughly with conversational topics rather than arbitrary word counts. Each chunk gets an intermediate summary, which feeds the final synthesis step.
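The boundary detection described above can be sketched in a few lines. The example uses tiny hand-made 2-D vectors in place of real embeddings so it runs without dependencies; in practice the vectors would come from the sentence embedding model. The 0.5 threshold and the function names are assumptions, and the sketch compares only adjacent segments, which is the simplest variant of the idea.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def split_on_topic_shift(embeddings, threshold=0.5):
    """Group consecutive segment indices into chunks.

    A new chunk starts wherever the similarity between a segment
    and the one before it drops below the threshold.
    """
    if not embeddings:
        return []
    chunks = [[0]]
    for i in range(1, len(embeddings)):
        if cosine(embeddings[i - 1], embeddings[i]) < threshold:
            chunks.append([i])      # similarity dropped: topic shift
        else:
            chunks[-1].append(i)    # same topic: extend current chunk
    return chunks


# Toy embeddings: segments 0-1 point one way, segments 2-3 another.
toy_embeddings = [[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.9]]
chunks = split_on_topic_shift(toy_embeddings)
```

With these toy vectors the similarity between segments 1 and 2 falls to roughly 0.31, so the transcript splits into two chunks of two segments each.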

For most podcast episodes, we don't actually need chunking. The 1M-token context window of Gemini 1.5 Flash and the 200K-token window of Claude handle even 3-hour episodes in a single pass. Chunking is reserved for edge cases and batch processing where parallelism matters more than coherence.

Step 5: LLM Summarization

The transcript (or chunked segments) is passed to Claude 3.5 Sonnet with a structured system prompt:

You are a podcast analyst. Given a podcast transcript, produce:

1. A 3-sentence episode summary in plain language

2. 5 key insights, each with a direct quote from the transcript

3. 3 actionable recommendations (if applicable)

4. The episode's main thesis in one sentence

Be specific — cite names, numbers, and examples from the transcript.

Avoid generic statements that could apply to any episode.

The "cite names, numbers, and examples" instruction is load-bearing. Without it, LLMs summarize podcasts with the same abstract language that could describe any episode on that topic. With it, summaries are specific enough to be immediately useful.

Multi-speaker attribution: When diarization data is available, we include speaker labels in the prompt: "SPEAKER_00 is the host. SPEAKER_01 is the guest." The LLM then attributes insights to specific speakers, making the summary more readable and factually traceable.
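Assembling the request from a diarized transcript might look like the sketch below. The payload shape (a `system` string plus a `messages` list) is modeled on common chat-completion APIs and is an assumption, as are the function names and the timestamp format; no network call is made.

```python
SYSTEM_PROMPT = """You are a podcast analyst. Given a podcast transcript, produce:
1. A 3-sentence episode summary in plain language
2. 5 key insights, each with a direct quote from the transcript
3. 3 actionable recommendations (if applicable)
4. The episode's main thesis in one sentence
Be specific: cite names, numbers, and examples from the transcript.
Avoid generic statements that could apply to any episode."""


def format_transcript(segments):
    """Render segments as '[12s] SPEAKER_00: text' lines."""
    return "\n".join(
        f'[{seg["start"]:.0f}s] {seg["speaker"]}: {seg["text"]}'
        for seg in segments
    )


def build_request(segments, roles):
    """Build a chat-style payload with speaker roles stated up front."""
    role_note = " ".join(f"{label} is the {role}." for label, role in roles.items())
    user_content = f"{role_note}\n\nTranscript:\n{format_transcript(segments)}"
    return {
        "system": SYSTEM_PROMPT,
        "messages": [{"role": "user", "content": user_content}],
    }


# Hypothetical two-segment transcript.
segments = [
    {"start": 0.0, "end": 4.0, "speaker": "SPEAKER_00", "text": "Welcome to the show."},
    {"start": 4.0, "end": 9.5, "speaker": "SPEAKER_01", "text": "Thanks for having me."},
]
request = build_request(segments, {"SPEAKER_00": "host", "SPEAKER_01": "guest"})
```

Keeping the timestamps in the transcript text gives the model something concrete to reference when a summary needs to point back to a part of the episode.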

Step 6: Delivery

The summary is formatted and delivered through the user's configured channels. sipsip.ai's Daily Brief supports:

- Email — HTML-formatted digest with episode cover art, title, and summary

- Discord DM — Markdown-formatted message via the sipsip Discord bot

- Telegram — Bot message with inline links back to the source episode

For users who subscribe to high-volume podcasts (daily news shows, multiple tech channels), the digest aggregates multiple episodes into a single delivery rather than sending one message per episode.
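The aggregation amounts to grouping finished summaries by recipient and channel before sending. The sketch below is an assumption about how that grouping could work; the record fields (`user_id`, `channel`, `podcast`, `title`, `summary`) and the function name are hypothetical.

```python
from collections import defaultdict


def build_digests(summaries):
    """Combine per-episode summaries into one digest per user and channel."""
    grouped = defaultdict(list)
    for item in summaries:
        grouped[(item["user_id"], item["channel"])].append(item)

    digests = []
    for (user_id, channel), items in grouped.items():
        body = "\n\n".join(
            f'{item["podcast"]}: {item["title"]}\n{item["summary"]}'
            for item in items
        )
        digests.append({
            "user_id": user_id,
            "channel": channel,
            "episode_count": len(items),
            "body": body,
        })
    return digests


# Hypothetical summaries waiting for delivery.
pending = [
    {"user_id": "u1", "channel": "email", "podcast": "Daily News",
     "title": "Monday", "summary": "Summary A."},
    {"user_id": "u1", "channel": "email", "podcast": "Tech Weekly",
     "title": "Ep 12", "summary": "Summary B."},
    {"user_id": "u2", "channel": "telegram", "podcast": "Daily News",
     "title": "Monday", "summary": "Summary A."},
]
digests = build_digests(pending)
```

Here the first user receives a single email covering two episodes, while the second receives one Telegram message.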

Performance Benchmarks from Production

After processing over 100,000 podcast episodes at sipsip.ai:

- Median end-to-end processing time: 78 seconds per episode (for 30–60 minute episodes)

- Transcription accuracy (clean audio): ~96.5% token accuracy

- Transcription accuracy (compressed/noisy audio): ~91–93%

- Summary satisfaction rate (user rating ≥4/5): 87%

The biggest driver of user satisfaction is transcription accuracy, not summarization quality. When the transcript has an error rate above 10%, even excellent LLM summarization produces wrong or misleading summaries. Audio preprocessing and model selection matter more than prompt engineering.
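For reference, transcript error is usually measured as word error rate (WER): the word-level edit distance between a reference transcript and the model output, divided by the reference length. The sketch below is a standard dynamic-programming implementation, not necessarily the metric behind the accuracy figures above, and the sample sentences are invented.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # prev[j] = edit distance between the processed ref words and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return prev[-1] / len(ref)


reference = "the guest said the price was fifty dollars"
hypothesis = "the guest said the bryce was fifty dollars"
wer = word_error_rate(reference, hypothesis)
```

A single misheard word in this eight-word sentence already gives a WER of 12.5%, and the wrong word happens to be the one that carries the meaning.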

For more on the transcription infrastructure specifically, see "Building Production-Grade Transcription with Faster-Whisper". For how the same pipeline handles YouTube videos and video files, see "Can AI Actually Watch and Analyze Videos?"


Across 8+ years, I've built full-stack and platform systems using TypeScript, Node, React, Java, AWS, and Azure, applying AI to practical problems and turning ambitious ideas into shipped products.