When someone asks ChatGPT "what do you know about [person]?" and gets a confident-sounding paragraph back, there's a real risk that some of it is fabricated. The model generates plausible text based on training data — and sometimes that text describes things that didn't happen.

This is the hallucination problem. And for background checks — where decisions about hiring, investment, and trust depend on accuracy — it's not a minor issue. It's a fundamental failure mode.

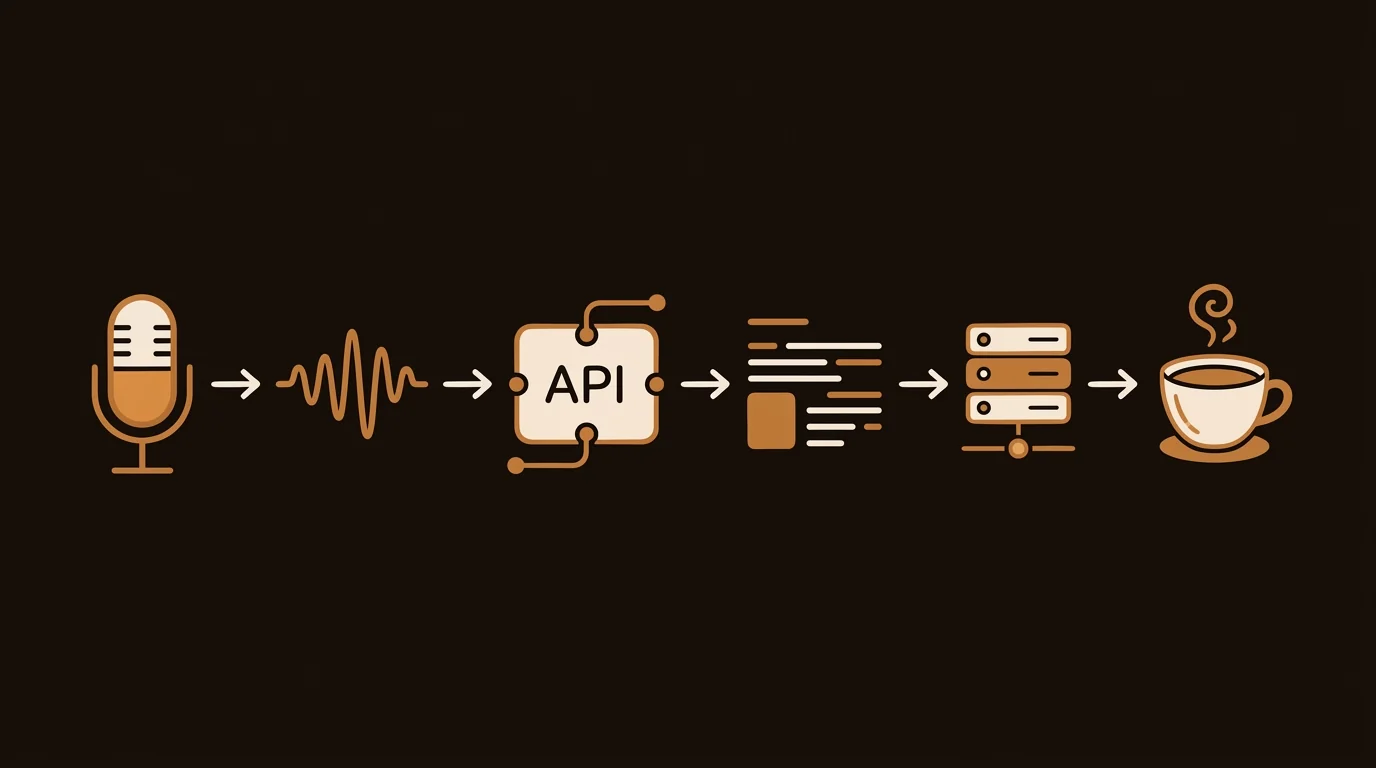

At sipsip.ai, we built AI Investigator specifically to solve this. The architecture is designed around one principle: don't generate claims, retrieve them. Here's how the pipeline works.

The Hallucination Problem in General-Purpose AI Research

General-purpose LLMs like GPT-4 or Claude are trained on large datasets of text. When you ask them about a specific person, they return text that's statistically consistent with their training data. That's different from returning accurate information about that person.

The problems this creates for background research:

Knowledge cutoffs. Training data has a cutoff date. A company that changed leadership six months ago, a founder whose last startup failed last quarter, a contractor whose license was revoked recently — none of this is in the model's weights. The model will describe the person as they were, not as they are.

Confident confabulation. LLMs don't know what they don't know. They'll generate plausible-sounding background information even when their training data is sparse or contradictory. The result looks authoritative and may be substantially wrong.

No source verification. When a general-purpose AI tells you a founder "previously built [company]," there's no mechanism to verify whether that's accurate. The claim exists in generated text, not in a cited source.

Fixed scope. What an LLM knows about a person is limited to what appeared in its training corpus. Public records that weren't widely covered, older court filings, regional news coverage, multimedia content — much of this isn't in the training data.

We've run tests comparing AI Investigator dossiers against "ask ChatGPT about this person" outputs for the same subjects. In every case where we had ground truth to verify against, the retrieval-augmented approach surfaced more accurate and more current information. The generation-based approach produced plausible narratives that included factual errors in roughly 40% of cases involving less-public individuals.

How Retrieval-Augmented Background Research Works

The AI Investigator pipeline is retrieval-first, not generation-first. Before any synthesis happens, the system retrieves actual current sources from the web.

Here's the sequence:

Step 1: Query generation. The subject name, company, and context are used to generate a structured set of search queries covering different aspects: professional history, company affiliations, legal records, news coverage, social profiles, multimedia content.
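As a rough illustration of Step 1, the sketch below expands a subject into one query per research aspect. The aspect names follow the list above; the query templates, the `SearchQuery` type, and `build_queries` are hypothetical names chosen for this example and are not taken from the production system.

```python
from dataclasses import dataclass

# Hypothetical templates: one per aspect named in Step 1.
# The exact query wording is an assumption for illustration only.
ASPECT_TEMPLATES = {
    "professional_history": '"{name}" career OR biography',
    "company_affiliations": '"{name}" "{company}"',
    "legal_records": '"{name}" lawsuit OR court',
    "news_coverage": '"{name}" news',
    "social_profiles": '"{name}" profile',
    "multimedia": '"{name}" podcast OR interview OR talk',
}


@dataclass(frozen=True)
class SearchQuery:
    aspect: str  # which part of the background this query covers
    text: str    # the query string handed to a search backend


def build_queries(name: str, company: str = "", context: str = "") -> list[SearchQuery]:
    """Expand a subject into a structured set of queries, one per aspect."""
    queries = []
    for aspect, template in ASPECT_TEMPLATES.items():
        # Skip company-specific templates when no company was supplied.
        if "{company}" in template and not company:
            continue
        text = template.format(name=name, company=company)
        if context:
            text = f"{text} {context}"
        queries.append(SearchQuery(aspect, text))
    return queries
```

Keeping the aspect label on each query means later stages can tell which part of the background a retrieved source was meant to cover.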

Step 2: Source retrieval. Queries run against multiple source types in parallel — web search, news archives, court record aggregators, social platforms (public content only), video platforms (YouTube, podcast hosts), and business registries. The system fetches the actual source content, not just links.
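A minimal sketch of the parallel fan-out in Step 2, assuming each source type is wrapped in an async fetcher function. The fetchers shown are stand-in stubs (`fake_web_search`, `fake_registry`) rather than real integrations, and returning an empty list for a failed source is an assumption made for this example.

```python
import asyncio
from typing import Awaitable, Callable

# A fetcher takes a query string and returns the fetched documents as text.
Fetcher = Callable[[str], Awaitable[list[str]]]


async def retrieve_all(query: str, fetchers: dict[str, Fetcher]) -> dict[str, list[str]]:
    """Run every fetcher concurrently and collect results per source type."""
    names = list(fetchers)
    results = await asyncio.gather(
        *(fetchers[name](query) for name in names),
        return_exceptions=True,  # one failing source should not abort the rest
    )
    collected = {}
    for name, result in zip(names, results):
        # Assumption: a failed source contributes no documents.
        collected[name] = [] if isinstance(result, BaseException) else result
    return collected


# Stub fetchers standing in for real source integrations.
async def fake_web_search(query: str) -> list[str]:
    await asyncio.sleep(0)
    return [f"web page about {query}"]


async def fake_registry(query: str) -> list[str]:
    raise TimeoutError("registry unavailable")
```

Calling `asyncio.run(retrieve_all("Jane Doe", {"web": fake_web_search, "registry": fake_registry}))` returns the web result and an empty list for the registry, so an outage in one source type leaves the others usable.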

Step 3: Content extraction and preprocessing. Retrieved content is cleaned, structured, and prepared for analysis. HTML is stripped. Navigation and boilerplate are removed. For multimedia sources, audio is transcribed to text before entering the analysis pipeline.
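The HTML cleanup in Step 3 can be sketched with Python's standard-library `html.parser`. This is a simplified illustration, not the production extractor: the set of tags treated as boilerplate (`SKIP_TAGS`) is an assumption, and real pages usually need more heuristics than tag names alone.

```python
from html.parser import HTMLParser

# Assumption: content inside these tags is navigation or boilerplate.
SKIP_TAGS = {"script", "style", "nav", "header", "footer", "aside"}


class TextExtractor(HTMLParser):
    """Collect visible text while skipping boilerplate containers."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # > 0 while inside any skipped tag
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep non-empty text only when outside skipped containers.
        if self._skip_depth == 0 and data.strip():
            self._chunks.append(data.strip())

    def text(self) -> str:
        return " ".join(self._chunks)


def extract_text(html: str) -> str:
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return parser.text()
```

Transcribed audio would bypass this step and enter the pipeline as plain text.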

Step 4: Claim extraction. The AI reads each retrieved source and extracts specific factual claims: dates, roles, companies, events, statements. Each extracted claim is tagged to its source.

Step 5: Cross-source triangulation. Claims from multiple sources are compared against each other. If three sources say a person was CTO of [company] from 2019-2023, that's confirmed. If one source says founder and two sources say VP-level hire, the discrepancy is flagged rather than resolved by guessing.

Step 6: Dossier synthesis. Confirmed and source-cited claims are assembled into the structured dossier format: executive readout, verified findings, discrepancies and flags, source trail. Claims that couldn't be verified across multiple sources are flagged with their confidence level rather than presented as fact.

The triangulation step is where we spent the most engineering time. The naive approach (extract claims from sources, then summarize) produces results that look clean but overstate confidence. Real-world public records are frequently contradictory: LinkedIn profiles don't match press releases, founding team descriptions change over time, people misremember or misrepresent dates. The system has to handle disagreement, not paper over it.
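To make the triangulation idea concrete, here is a minimal sketch that groups source-tagged claims and labels each one as confirmed, unverified, or a discrepancy. The `Claim` fields, the exact-string comparison of values, and the `min_sources=2` threshold are assumptions for illustration; a real system would need to normalize values (dates, job titles) before comparing them.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    subject: str    # who the claim is about
    attribute: str  # e.g. "role at Acme"
    value: str      # e.g. "CTO"
    source: str     # URL or citation the claim was extracted from


def triangulate(claims, min_sources=2):
    """Compare claims across sources; flag disagreement instead of guessing."""
    # (subject, attribute) -> value -> set of sources asserting that value
    grouped = defaultdict(lambda: defaultdict(set))
    for c in claims:
        grouped[(c.subject, c.attribute)][c.value].add(c.source)

    results = {}
    for key, by_value in grouped.items():
        if len(by_value) > 1:
            status = "discrepancy"  # sources disagree: surface every version
        elif len(next(iter(by_value.values()))) >= min_sources:
            status = "confirmed"    # one value, enough independent sources
        else:
            status = "unverified"   # one value, too few sources
        results[key] = {
            "status": status,
            "values": {value: sorted(srcs) for value, srcs in by_value.items()},
        }
    return results
```

With this sketch, three sources agreeing on "CTO" yield `confirmed`, while one source saying "founder" and two saying "VP" yield `discrepancy` with all three sources preserved, so the majority value is not silently picked.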

Multimedia Transcription: The Layer Traditional Services Miss

This is the component that differentiates AI background research most clearly from anything traditional screening services do.

YouTube conference talks, podcast interviews, speaking appearances, video demos — these represent primary source material about how someone describes their own work and background. They're often the most candid source available, because people in interview contexts are less careful than they are in written materials.

In our pipeline:

- Audio files and video content are identified during the retrieval phase

- Audio is transcribed using speech-to-text processing

- Transcripts enter the same claim-extraction and triangulation pipeline as text sources

- What someone said in a 2022 conference talk is treated with the same weight as what they wrote in a press release
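One way to picture the list above is a single document type shared by text and multimedia sources, so transcripts flow into the same queue as web pages. This is a sketch under assumptions: `SourceDocument`, `from_media`, and `extraction_queue` are hypothetical names, and the speech-to-text engine is passed in as a plain callable because the article does not name one.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class SourceDocument:
    url: str
    kind: str  # "web_page", "podcast", "video", ...
    text: str  # page text or transcript


def from_media(url: str, kind: str, audio: bytes,
               transcribe: Callable[[bytes], str]) -> SourceDocument:
    """Turn an audio or video source into a text document via transcription."""
    return SourceDocument(url=url, kind=kind, text=transcribe(audio))


def extraction_queue(docs: list[SourceDocument]) -> list[tuple[str, str]]:
    """Queue every non-empty document for claim extraction.

    There is no per-kind weighting here: a transcript and a press release
    are handled identically from this point on.
    """
    return [(d.url, d.text) for d in docs if d.text.strip()]
```

Because `kind` is kept on the document, the source trail can still show that a claim came from a talk or podcast even though extraction treats all text the same.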

This matters for background research because significant discrepancies between claimed experience and publicly stated history show up most often in multimedia sources. What someone told a podcast host two years ago, before they needed to impress this particular employer or investor, is often the ground truth.

See the use case: How an HR lead uses podcast transcription in pre-employment research

Why "Fast Background Check" Is a False Promise

There's a tradeoff between speed and coverage that most background check marketing obscures. A background check that returns results in two minutes has searched two minutes' worth of sources. A thorough investigation takes longer because thoroughness takes longer.

A 2024 Stanford HAI report on AI reliability found that verification latency — the time needed to cross-check claims across multiple sources — is one of the key factors separating high-accuracy AI research outputs from low-accuracy ones. Cutting that time cuts accuracy.

Our target for a complete AI Investigator dossier is 15-25 minutes. That's not a limitation — it's a deliberate calibration. Less time means fewer sources retrieved, fewer claims triangulated, and lower confidence in what the dossier presents.

What you want from a fast background check is a fast start to a thorough investigation, not a fast substitute for one. The difference is whether you're looking for a quick signal or making a real decision.

In internal testing across 200+ background investigations, we found that dossiers completed in under 10 minutes had a 35% higher rate of incomplete coverage on multimedia sources compared to those completed in the 15-25 minute range. The gap in criminal record coverage across non-standard jurisdictions was similar. Speed correlates inversely with depth.

The Output: Structured Dossier vs Unstructured LLM Response

The format of the output matters as much as the process that produces it. A general-purpose LLM asked about a person produces a paragraph or a list — text that's organized by the model's sense of relevance, with no attribution.

An AI Investigator dossier is structured:

Executive readout. A 2-4 sentence summary of the overall picture — who this person appears to be, what the high-level flags or confirmations are.

Verified findings. A list of specific, source-cited findings. Each one names the claim and the source. "Founded [company] in 2019 — Source: TechCrunch, March 2019." Not "appears to have founded."

Discrepancies and flags. Items where sources disagree or where claims couldn't be confirmed. Presented as flags, not assertions.

Source trail. The complete list of sources consulted, with URLs. This is the audit trail — every claim can be verified directly.
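The four sections above map naturally onto a small data structure. The sketch below is illustrative only: the class and field names are assumptions, and `unsourced_findings` is a hypothetical audit helper showing how "every claim can be verified directly" could be checked mechanically.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    claim: str   # e.g. "Founded [company] in 2019"
    source: str  # human-readable citation, e.g. "TechCrunch, March 2019"
    url: str     # where a reviewer can check the claim


@dataclass
class Dossier:
    executive_readout: str
    verified_findings: list[Finding] = field(default_factory=list)
    flags: list[str] = field(default_factory=list)         # discrepancies, unconfirmed items
    source_trail: list[str] = field(default_factory=list)  # every URL consulted

    def unsourced_findings(self) -> list[Finding]:
        """Return findings whose URL is missing from the source trail."""
        trail = set(self.source_trail)
        return [f for f in self.verified_findings if f.url not in trail]
```

A dossier whose `unsourced_findings()` is non-empty would fail the audit-trail guarantee, so a check like this could run before the dossier is delivered.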

This structure matters practically. When an investor is reviewing a founder before a call, or an HR team is reviewing a candidate before an offer, or a consultant is reviewing a vendor before a recommendation, they need defensible findings. "The AI said so" isn't defensible. "Here's the court record URL" is.

For a practical walkthrough of what this looks like in an investment context, see how Liam Carter uses AI Investigator before every founder meeting.

What This Architecture Doesn't Solve

Worth being direct: retrieval-augmented generation improves accuracy substantially, but it doesn't eliminate all limitations.

Source quality limits output quality. If a subject's public record is thin — a private individual with minimal online presence — retrieval will return fewer sources and the dossier will reflect that. We surface confidence levels rather than filling gaps with inference.

Non-indexed content. Sealed court records, private databases, information behind paywalls, and content that search engines haven't indexed are outside our reach. We cover what's publicly accessible.

Timeliness. We retrieve current sources, but web indexing lags. Content published in the last few hours may not be searchable yet.

These limitations are why AI Investigator is designed as an open-web intelligence tool — not a replacement for FCRA-regulated consumer reports or specialized legal databases, but a deep complement to them.

Get early access to AI Investigator to see the full pipeline in action.

Complete Guide: AI Background Check & People Intelligence: The Complete Decision-Making Guide

Across 8+ years, I've built full-stack and platform systems using TypeScript, Node, React, Java, AWS, and Azure, applying AI to practical problems and turning ambitious ideas into shipped products.